
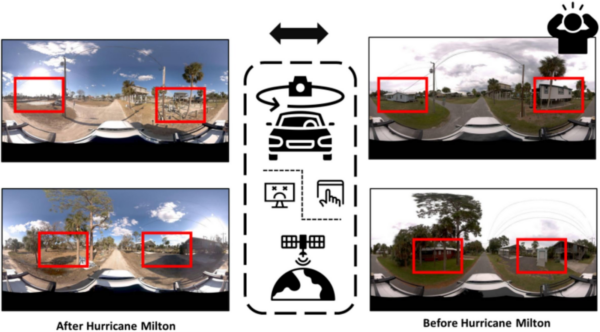

This article was originally published on LinkedIn by our Co-Founder and CEO, Jeffrey Martin. He also presented on the topic this week at 3Dise, a conference that focuses on 3D, immersive, and spatial experiences.

“World Model?” you say. “What is a world model?”

If you haven’t heard of it before, just note down the time and place and remember that today was when you first heard about world models.

Before we dive in, let’s talk about buzzwords…

AGI. Social Engineering. Digital Twin. Deep Learning. Metaverse. Nanotechnology. Sustainable. Virtual Reality. Blockchain. Quantum. Information Superhighway. Big Data. Algorithm. Cloud Computing. Multimedia. Cybernetics. Plastics! Electronic brain. Synergy. Atomic. Pneumatic. Ether. Galvanism. Steam power!

Quick – all you cinema fans, can you tell me what buzzword this guy is saying?

The answer is “plastics,” of course! (The Graduate, 1967)

We just love buzzwords, don’t we? Buzzwords are the magic spark of hope injected into our incremental lives. Don’t get caught off-guard; there is a sea-change coming, something is swooping over the horizon, and it’s going to change everything.

Obviously, some inventions, ideas, and concepts are interesting, important, and relevant, yet also complicated and hard to grasp intuitively, especially while they are still new. Some of them fizzle out without reaching their potential (because they’re not that useful), like blockchain or NFTs. Others become so absorbed into our reality that children literally can’t understand how people managed without them (the internet, electricity).

Today, in March 2026, lots of us are finding our sea legs with our new friend the LLM – as we did 30 years ago with the explosion of the World Wide Web – wondering what kind of unknowable changes we are facing as a civilization.

As always, it’s fundamentally easier to imagine what will break than what will be created (so it’s easier to be scared than excited). It is also very difficult, if not impossible, to imagine the second- and third-order effects of things that will be invented on top of the underlying technology – think about teenagers’ ambivalent feelings towards social media, for example.

Anyway, I’m not here to talk about how LLMs are going to replace lawyers. Instead, I’m here to talk about how World Models are going to replace pizza delivery people. 😉 I’m going to define what a World Model is, why world models are fascinating, and how they tie into some of the greatest mysteries of science and human knowledge, and even get to the very core of what it means to be an embodied creature.

What is a World Model?

The term “world model” has roots in neuroscience. Which, to be clear, is an extremely difficult field, because we STILL don’t know what is really going on in the brain, exactly. We’re still arguing about whether it’s neurons or synapses that are the unit of computation, for example. That is to say, neuroscience is deeply in bed with the philosophy department on one side, while the machine learning people lick their lips in anticipation of what they might infer for their machines.

Anyway, for neuroscientists, a world model is the brain’s internal, generative representation of the causal structure of the external environment. In simpler terms, we generate an idea of how the world works in order to function within it. The idea traces back to Hermann von Helmholtz in the 1860s and his concept of unbewusste Schlüsse, or “unconscious inference.”

From there, Kenneth Craik in 1943 proposed that the brain constructs “small-scale models” of reality: internal representations that mirror the relational structure of the external world, and uses these models to simulate possible events, evaluate actions, and anticipate outcomes. Generally, people credit Craik with the first explicit formulation of this “world model” idea.

After this came the post-war boom and the birth of computers. Computer programmers and control engineers joined the party, trying to figure out how to control machines with something more than a PID controller. They came up with two complementary ideas: “forward models” and “inverse models.”

A forward model is a prediction machine. Given what I’m doing right now, what will happen next? If I push this cup, where will it slide? If I turn the steering wheel this much, how will the car respond? The brain runs these predictions constantly, and critically, it runs them faster than the sensory feedback actually arrives – which it has to, because neural signals are slow. This is why you can catch a ball without consciously computing a parabolic trajectory. Your forward model is already simulating where the ball will be before your eyes have confirmed it. But remember: you weren’t born knowing how to catch. You built that model by watching and interacting with thousands of moving objects over the course of your childhood, each one being a piece of “training data”.
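To make the idea concrete, here is a minimal sketch of a forward model in Python. It is only an illustration: the `BallState` type, the `forward_model` function, and the 0.1-second sensory delay are assumptions made up for this example, and a hand-written physics rule stands in for what a brain would have learned from experience rather than from an equation.

```python
from dataclasses import dataclass

GRAVITY = 9.81  # m/s^2, pulling downward


@dataclass
class BallState:
    x: float   # horizontal position (m)
    y: float   # height (m)
    vx: float  # horizontal velocity (m/s)
    vy: float  # vertical velocity (m/s)


def forward_model(state: BallState, dt: float) -> BallState:
    """Predict the ball's state dt seconds ahead (air resistance ignored)."""
    return BallState(
        x=state.x + state.vx * dt,
        y=state.y + state.vy * dt - 0.5 * GRAVITY * dt**2,
        vx=state.vx,
        vy=state.vy - GRAVITY * dt,
    )


# What the eyes last reported; by the time it arrives it is already stale.
seen = BallState(x=0.0, y=2.0, vx=5.0, vy=1.0)
SENSORY_DELAY = 0.1  # seconds, an assumed value for illustration only

# Run the prediction forward to estimate where the ball is right now.
now = forward_model(seen, SENSORY_DELAY)
print(f"Ball is probably at x={now.x:.2f} m, y={now.y:.2f} m")
```

The point of the sketch is the shape of the computation: current state plus elapsed time in, predicted state out, before any new sensory data has arrived.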

An inverse model runs the computation backward: given where I want to end up, what do I need to do to get there? I want my hand on that coffee cup – what muscle activations will make that happen? It takes a desired outcome and works backward to the required action.
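As a rough sketch of the same idea in Python, consider throwing a ball to a chosen spot. The function names `forward_range` and `inverse_speed`, and the fixed 45-degree launch angle, are assumptions for this example; real motor control is far messier than one closed-form formula.

```python
import math

GRAVITY = 9.81  # m/s^2


def forward_range(speed: float, angle_deg: float = 45.0) -> float:
    """Forward direction: action (launch speed) -> outcome (landing distance)."""
    angle = math.radians(angle_deg)
    return speed**2 * math.sin(2 * angle) / GRAVITY


def inverse_speed(target_distance: float, angle_deg: float = 45.0) -> float:
    """Inverse direction: desired outcome -> the action that produces it."""
    angle = math.radians(angle_deg)
    return math.sqrt(target_distance * GRAVITY / math.sin(2 * angle))


# "I want the ball to land 10 m away. How hard do I throw?"
speed = inverse_speed(10.0)
print(f"Throw at about {speed:.2f} m/s")

# Feeding the proposed action back through the forward direction
# should return the outcome we asked for.
print(f"Predicted landing distance: {forward_range(speed):.2f} m")
```

Here the inverse function is simply the forward formula solved for the action, which is only possible because the toy physics is so simple.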

These two work as a team. The inverse model proposes a plan, the forward model predicts what will happen if you execute it, and the difference between the prediction and what actually happens – the prediction error – is used to refine both models. This is why your first attempt with an unfamiliar tool feels clumsy, but within a few tries, you’ve recalibrated. Your models are updating in real time. You might also notice that with a richer and more varied background in something, it is easier to learn or master it – this, again, is thanks in large part to your training data: everything you’ve ever done, seen, heard, and touched.
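The loop described above can be sketched in a few lines of Python. Everything here is a simplifying assumption for illustration: the "tool" is reduced to a single hidden number (`TRUE_GAIN`, how far an object slides per unit of push), both models share one learned estimate of it, and the update rule is a basic error-proportional correction rather than anything claimed about real neurons.

```python
TRUE_GAIN = 2.0      # how the unfamiliar tool really behaves (hidden from the learner)
LEARNING_RATE = 0.5  # how strongly each prediction error corrects the estimate


def practice(target: float, tries: int, gain_estimate: float = 1.0):
    """Repeat plan -> predict -> act -> compare -> update, and log the errors."""
    errors = []
    for _ in range(tries):
        push = target / gain_estimate     # inverse model: outcome -> action
        predicted = gain_estimate * push  # forward model: action -> expected outcome
        actual = TRUE_GAIN * push         # what the world actually does
        error = actual - predicted        # prediction error
        errors.append(error)
        # Nudge the shared estimate in the direction that shrinks the error.
        gain_estimate += LEARNING_RATE * error / push
    return gain_estimate, errors


final_gain, errors = practice(target=1.0, tries=10)
print(f"Learned gain: {final_gain:.3f} (true value {TRUE_GAIN})")
print("Prediction errors:", [round(e, 3) for e in errors])
```

The first try overshoots badly, and each later try misses by less, which is the clumsy-then-calibrated pattern in miniature.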

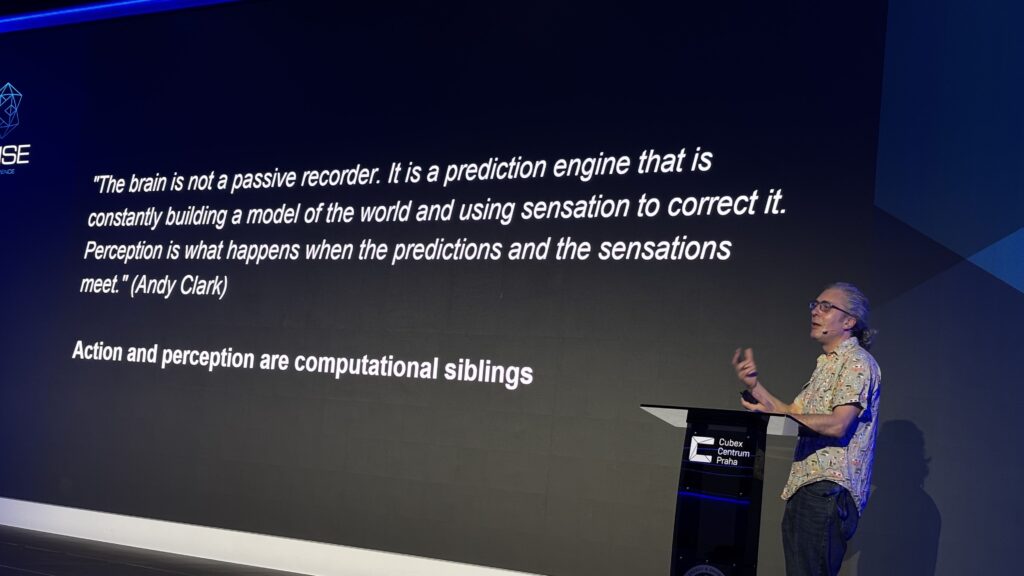

By the mid-2000s, we had Karl Friston’s “free energy principle,” which proposes explicitly that the brain uses a hierarchical generative model in which perception, action, understanding, and learning form a single self-reinforcing loop. Andy Clark has refined this concept over the past decade or so under the name “predictive processing,” which you can think of as a flywheel of action and perception.

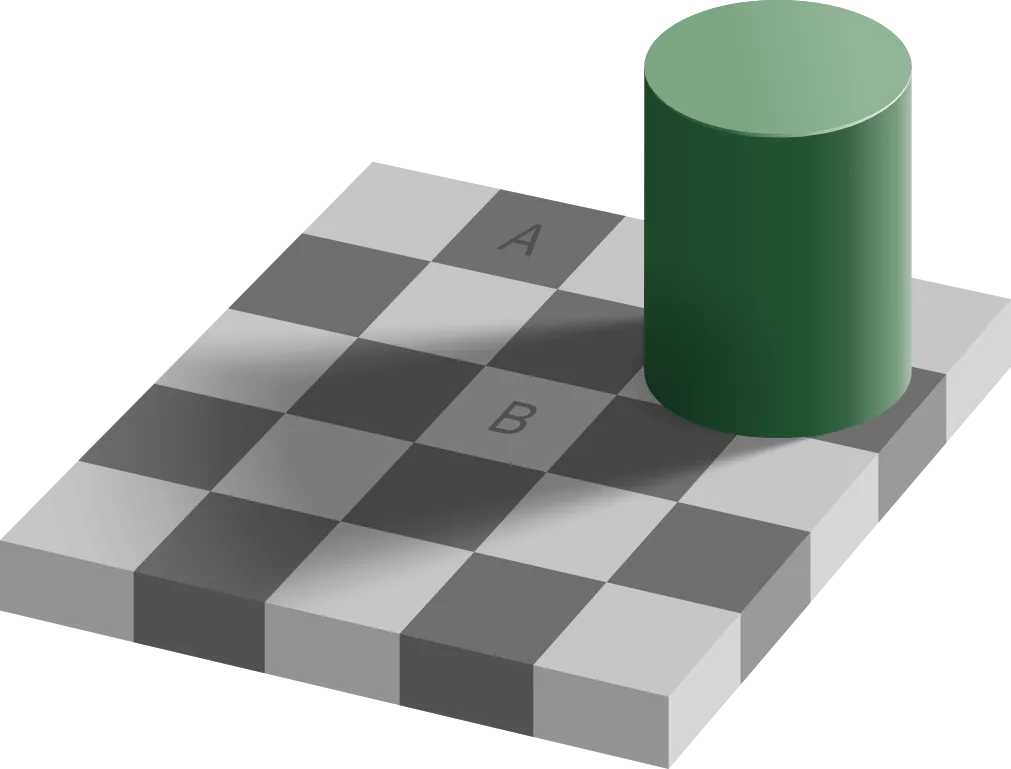

Scientists seem to agree that when we perceive and act and learn, it is efficient and it works well because of all of the experience that we have gained over time – it is all about the “training data”. When we perceive the world with our eyes and ears, we are not running a complete 3D reconstruction of reality all the time – we are using our prior knowledge of what is already familiar to us (“priors”) in order to make as many shortcuts as possible, to quickly figure things out – and then we’re fine-tuning these assumptions (feedback/error correction) with our observations.
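One common way to formalize "prior plus correction" is Bayesian updating. The sketch below is an assumption-laden toy, not a claim about how neurons do it: it treats both the prior belief and the sensory observation as Gaussian estimates of one number, and the function name `combine` is invented for this example.

```python
def combine(prior_mean: float, prior_var: float,
            observation: float, observation_var: float):
    """Fuse a Gaussian prior with a noisy Gaussian observation.

    Each source is weighted by its precision (1 / variance), so the
    more reliable source pulls the final estimate harder.
    """
    prior_weight = 1.0 / prior_var
    obs_weight = 1.0 / observation_var
    posterior_var = 1.0 / (prior_weight + obs_weight)
    posterior_mean = posterior_var * (
        prior_weight * prior_mean + obs_weight * observation
    )
    return posterior_mean, posterior_var


# Equally trusted prior and observation: the estimate lands halfway between.
print(combine(prior_mean=10.0, prior_var=4.0, observation=14.0, observation_var=4.0))

# A confident prior and a noisy observation: the estimate barely moves.
print(combine(prior_mean=10.0, prior_var=1.0, observation=14.0, observation_var=9.0))
```

The second call shows how a strong prior can outvote the senses: the observation says 14, but the combined estimate stays close to 10.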

Your Predictive Processing engine fails at this task: Your top-down prediction is that square A and square B are different colors, despite your bottom-up senses actually looking at two squares that are the same shade of grey.

This leads us to the idea that the brain is basically a single-purpose “world model” machine. Its inputs are the external world (perception) and its own embodied actions (action), filtered by whatever task it is currently focused on (attention).

A world model is the way a brain encodes the world that allows that creature to thrive in the world. It’s what allows a creature to learn by action, to learn by observing others, to learn by making mistakes, and to, most of the time, correctly predict what is going to happen in the short term, and sometimes in the long term.

So, in short: a world model is what we sometimes call our “mind’s eye”. Our mind’s eye is built from a lifetime of sensory experience.

We build worlds that build minds (that build worlds)

Now, as I said already, we don’t actually know any of this to be true – at least, not the way that we know Newton’s laws of motion or Einstein’s theories of relativity to be true. We are in Plato’s cave with the brain. Only in the last few decades have we had the equipment necessary to peer into its inner workings at a molecular level, and even then, its sheer scale is orders of magnitude beyond what we can truly make sense of. But, just as we don’t need to know the movements of individual molecules to create the field of fluid dynamics, it might not matter too much what each individual synapse or dendrite is doing.

We are making a lot of progress in small steps. Optical illusions, along with MRI scans of both healthy people and people with specific conditions (e.g., schizophrenia, autism) or brain damage, reveal a lot about what is or isn’t going on in specific circumstances. I would say that as of 2026, we have some very solid foundations about a lot of specific mechanisms in the brain, but we are still quite far from putting all the pieces together into a coherent “specification” of how it all really works.

And yet… lots of people are trying, and increasingly there are two teams working on the problem: Team Brain (the neuroscientists) and Team Machine (the computer scientists). The computer scientists – the AI people – have of course been inspired by the brain since the beginning. “Neural networks” have been around since the 1950s, and are inspired by actual neurons, at least in the way they were understood at the time.

Now, if you don’t know how a brain works, how do you build one? Well, it turns out, our brains are so amazing that we invent lots of things without knowing how or why they work. The steam engine was invented by Newcomen in 1712 and improved by Mr. Watt in the 1760s, but it took until 1824 before Mr. Carnot outlined thermodynamics in the way we understand it today. Humans fermented food and made beer for millennia before we understood what was actually happening. Vaccination started in 1796, from an observation that milkmaids didn’t catch smallpox, well before any idea whatsoever of “the immune system”. And the Wright brothers built an airplane that worked through trial and error, testing different wing shapes in wind tunnels until they found a solution.

So while we might never truly understand exactly how our ~86 billion neurons and hundreds of trillions (!) of synapses do what they do with such amazing speed and efficiency, we are reaching a plausible explanation of what they might be doing in conceptual terms, in order to simulate a similar outcome using a computer.

Now, the human brain is arguably the most complex thing that exists in our observable universe. In terms of building something that can think and interact with the world, maybe we’re aiming a little bit high by starting there. There is a spectrum of “animals that think”: worms, insects, jumping spiders, fish, amphibians, rodents, cephalopods, dogs, pigs, corvids, apes, and humans.

Can we simulate the brain of a worm? Well, it depends on what you mean by “simulate”. If you mean “duplicate what the actual neurons are doing”, then the answer is more of a “no” than you might realize. One well-known example is the roundworm Caenorhabditis elegans, which has just 302 neurons, 282 of which belong to its somatic nervous system (the remaining 20 serve the pharynx). We have mapped these neurons and their several thousand synaptic connections, but we still do not have a complete explanation of how this worm can move backward. So in terms of reverse-engineering a mind from its components… forget it.

But can we simulate the basic behavior of a worm, based on specific feedback loops? I would say that yes, we can. Can we simulate… a dog? I would very much doubt it. I would venture to say that we could set our sights on something between an insect and a rat. Even a rat might be a tall order; rats have goals and desires, and we even know that they dream (dreaming apparently being an essential part of a functioning advanced mind).

Just what are we aiming for? We are trying to grow a mind in a computer that can achieve reliable and safe navigation and interaction in the physical world, to start. We don’t need robots that have inner desires or social interactions (yet). We just want to invent something that can perform tasks in unfamiliar circumstances based on past experience, as many animals do.

The minds of all animals are built upon sensory experience: Seeing, touching, hearing, and navigating. An animal raised in a dark room would never be able to function properly for the remainder of its life, due to the lack of prior knowledge gained from sensory experience. The quality of the intelligence that emerges from a brain depends heavily on the quality of the sensory experience, which allows it to learn.

End of Part One. Stay tuned for Part Two!