This article was originally published on LinkedIn by our Co-Founder and CEO, Jeffrey Martin. It is part of a series. See Part One: What is a World Model here.

(100% written by a non-buddhist human – all emdashes are in fact my own)

I’ve been spending the past few weeks studying the mind, the brain, and what we think it is doing, why it works as well as it does, in the grander context of “How are we going to build true AI, and how can I help with that, as an expert in computational vision systems”.

My 19-year-old son said, “If you study this for long enough, you might become religious!” Ok, this has not happened yet, but I have, pretty quickly, found that this material… doesn’t intersect – it collides heavily with a lot of ancient thinking that people have been doing for millennia – it hits some of the fundamental questions about who we are and what defines us in the context of the world as a whole.

We still do not understand some very fundamental things about brains. Many great minds have done incredible experiments, brain scans, observations, and philosophizing, and we are slowly understanding pieces here and there, but “the whole package” still very much eludes us. It seems as though we’re still in the “uncovering more questions than answers” phase in this branch of science.

For example, we still aren’t sure what the basic unit of computation is within the brain; we used to think it’s neurons, now a lot of people think it’s not neurons, but rather it’s the synapses. We have around 86 billion neurons and we have 100 trillion synapses, by the way. Each synapse might do a number of different things, communicating with a different type of neurotransmitter.

Over the last decade or two, we have been reevaluating what the different parts of the brain do. They are not discrete components; it appears that they’re all involved in lots of things, and all of them are involved in both thought and action.

I am trying to answer a couple of fundamental questions for myself:

- Why can a human mind do things a computer can’t do (make a sandwich, fold laundry, learn how to drive a car in only a few hours, figure out how to perform an unfamiliar task in one or two tries, generally operate and function safely around people)? Why can even a rat or dog mind do things a computer can’t do?

- How would we build a robot that actually does these things?

In 2026, we still… have no idea. This, to me, is both astonishing and tremendously exciting. It is, I think, among the most fascinating mysteries that remain in the sphere of human knowledge.

Much of the most recent research about how minds grow, develop, and learn points to the same requirement: the mind must exist within a context. Specifically, the mind (the software running on the hardware that is the brain) uses sensory data to evolve from its helpless baby state to its incredible (at least sometimes) adult state. Or to put it differently, the mind requires a body, and a world (with other minds in it) to become the thing that it is. You can’t have a mind in isolation. It can only become, by having a body, with that body moving around in the world. You just can’t have it any other way.

Are we designing AIs like this? Are we designing robots like this? And… isn’t this a whole lot like… Buddhism?!

Just a quick refresher on Buddhism, in case you forgot: Dependent origination (pratītyasamutpāda) holds that nothing, including the mind, arises independently. Consciousness emerges through the contact between sense organs, objects, and awareness. There’s no self that exists in isolation (anatta).

So on one hand, we don’t know how the mind works exactly, but on the other hand, maybe we have known all along, or at least for the past 2500 years or so. Again, I find it freaky and strange and exciting to see an intersection of neuroscience, robotics, and AI, with such themes of philosophy and religion. It is, I guess, inevitable to some extent.

Now that I’ve revealed the wacky part, let’s try taking it at face value and walk through what it means, step by step.

Babies

How does a baby get from “totally helpless infant” (first days after birth) to “perceiving, acting, moving through the world, planning, and strategizing”? One of the first things that happens is that the baby’s mind calibrates itself to the body that it has. The baby begins experiencing the world with her eyes and with her hands. Other people’s faces and especially their eyes are very salient (and the brain devotes a lot of space to understanding faces and eyes). Objects can be held and tasted. There is gravity. It hurts to bump into stuff and fall over. Hunger needs to be addressed. Pain should be avoided. There are things that feel good and taste good.

In aggregate, it sounds like we have just a bunch of basic rules. Maybe we do, but it is messier than that, and we are doing things so quickly – perceiving, acting, reacting – that there is something clearly very sophisticated and incredibly efficient going on. Knowing what we know about our eyes, what they actually see, how the eyes move (saccades – lots of jerky movements), it is clear that we are not running a complete 3d scan of our environment at all times, and in fact there is very little that we truly see in detail at any one moment. The “image” of the world around us is built on lots of learned facts about what we already know, and our eyes add detail, confirm assumptions, and make corrections, all on the fly, and very accurately. Likewise, every movement we make is simultaneously acting out our desire, and verifying a prediction we are making about what will or will not happen. We live in a constant cycle of perception and action, learning by doing, doing by learning. What we think and what we are, and where we are (and who we are with) can be viewed somehow as a single system.
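For the programmers in the audience, the predict-then-correct cycle described above can be caricatured in a few lines of Python. This is only a toy sketch under loud assumptions: the function name `track`, the `gain` parameter, and the idea of squeezing "the world" into a single number are all mine, invented for illustration, and nobody is claiming the brain literally runs this loop. It just shows the shape of the idea: hold a belief, compare it with what the senses report, and nudge the belief by a fraction of the surprise.

```python
def track(observations, gain=0.5, initial_guess=0.0):
    """Keep a running belief and nudge it toward each new observation.

    Hypothetical toy model: 'gain' controls how strongly a surprise
    corrects the belief (0 = ignore the senses, 1 = believe them fully).
    """
    belief = initial_guess
    surprises = []
    for sensed in observations:
        predicted = belief                    # what we expect to sense next
        error = sensed - predicted            # the surprise: sensed minus expected
        belief = predicted + gain * error     # correct the belief a little
        surprises.append(abs(error))
    return belief, surprises


# A world that keeps reporting the same value: the surprise shrinks
# at every step as the belief catches up with reality.
belief, surprises = track([10.0] * 8)
print(belief)
print(surprises)
```

The design choice worth noticing is that nothing here scans the whole world; the loop only spends effort on the gap between expectation and sensation, which is the point the paragraph above makes about our eyes.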

Computers

Ok, how does a machine learn? (Flash course on AI!)

In the 1950s, the AI world was split into two camps: the literalist people (the symbolic tribe), led by Marvin Minsky, who thought that we could just make a bunch of if/then rules to simulate thinking; and the connectionist tribe, led by Frank Rosenblatt and his “perceptron” (the first, simple “neural network”), who posited that our brain is a network of simple units that learn from data.

As it turns out, the world is a noisy and messy place, and the literalist people struggled to make ironclad rules that actually worked properly, while the connectionist folks (revived by Hinton and others in the 1980s) had a more flexible approach to our messy world, and this seemed to be much more effective for getting a machine to learn something; the neural network, obviously, being inspired by the brain! However loose, primitive, and naive this inspiration may have been, it has grown into most processes that we call “AI”. The breakthrough came about 14 years ago (AlexNet), an outgrowth of our teenage desires for better video games, which drove incredible improvements in the parallel processing of GPUs and made huge (“deep”) neural networks possible: “Deep Learning” was born.

A neural network, like a brain, is something that begins in a state of “not quite ready to do what you want it to do until it has been trained on stuff”. This is where neural networks and brains start to diverge a bit. To train a neural network, you feed it a truly insane quantity of data and let the neural net do a few billion steps of trial-and-error. Humans help by showing the neural network what the result SHOULD be, and the neural network, with its hundreds of intermediate layers and billions of parameters, eventually reshuffles itself in order to probabilistically spit out whatever is the most appropriate thing, based on what it is supposed to do. This is how you make an AI to detect things in photos, for example. LLMs are even stranger – we just feed them all of the text that has ever been written and ask them to figure out how to predict the next word. We also have mechanisms for human (and automated) feedback in order to refine this process. The fact that LLMs (and the particular architecture behind them, the Transformer, introduced in 2017 in “Attention Is All You Need”) work so well truly did surprise everyone – I mean everyone, even the most optimistic and deeply involved computer scientists in the field.
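To make "show it what the result SHOULD be and let it reshuffle itself" concrete, here is a minimal sketch in Python of a single perceptron-style unit learning the logical AND of two inputs. It is a toy under stated assumptions: the function names, the four-example dataset, and the choice of AND are mine for illustration, and a modern deep network differs enormously in scale and in how it computes its corrections. The loop has the same spirit, though: guess, compare with the label a human supplied, adjust.

```python
def weighted_sum(weights, bias, inputs):
    """Combine the inputs using the unit's current weights and bias."""
    return bias + sum(w * x for w, x in zip(weights, inputs))


def predict(weights, bias, inputs):
    """Fire (1) if the weighted sum is positive, otherwise stay quiet (0)."""
    return 1 if weighted_sum(weights, bias, inputs) > 0 else 0


def train_perceptron(examples, epochs=20, learning_rate=1.0):
    """Trial and error: guess, compare with the label, nudge the weights."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in examples:
            error = target - predict(weights, bias, inputs)  # -1, 0, or +1
            # Move each weight in the direction that reduces the error.
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Hypothetical labeled data: the "what the result SHOULD be" part.
AND_EXAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(AND_EXAMPLES)
predictions = [predict(weights, bias, inputs) for inputs, _ in AND_EXAMPLES]
print(predictions)  # matches the labels once training has converged
```

Nobody wrote an if/then rule for AND here; the rule ends up encoded in three numbers that were pushed around by mistakes. Scale the three numbers up to billions and the four examples up to most of the internet, and you have the general flavor of what the paragraph above describes.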

Can we get a machine to learn the way a baby does?

Yes, maybe. I don’t know. Has anyone been thinking about this or working on it? Yes: Rodney Brooks, Karl Friston, Yann LeCun, and others. But it is still very much an unsolved problem. What does this even mean, to have a machine learn the way a baby does? In organisms, we have two processes – evolution and development. In the machine learning / AI world, we kind of combine both of those things into something we call “training”. With brains, evolution has given us the architecture of our brain, with its particular latent structure and potential for certain capabilities of perception, thought, and action; the “training” and “production” are very much piecewise and concurrent, with existential consequences (don’t die).

Can we simulate it?

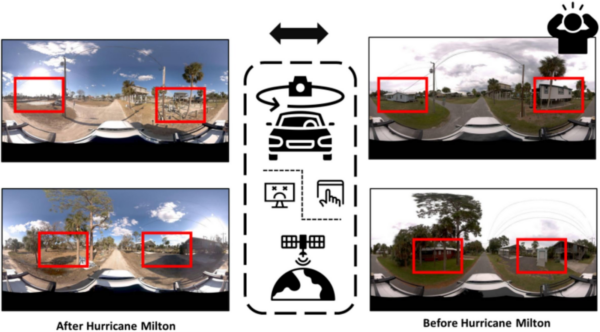

This is where things get uncomfortably philosophical again, as things seem to do when we are talking about what exactly the mind really is, how it maps to the brain, and “who are we exactly” kinds of topics. We are, as humans, running a simulation of the world as we understand it, in our head, nonstop (even when dreaming, although that’s by design disconnected from our live senses). We have already established quite definitively that we are not directly experiencing the world, but rather filtering it through assumptions and predictions, in order to dramatically simplify it and allow us to act quickly on what is salient at that moment in time. So the question might rather be, “how many layers of abstraction can we simulate before it stops making any practical sense?” Instead of building a team of baby robots and unleashing them on the world to figure things out, can we do the same, but confined to a virtual world, some kind of video game? This would of course allow us to do it a million times, for a million years. In the spirit of the Bitter Lesson (more on that in the next post), the answer is probably yes, we should.

While we are asking “can we teach embodied robots how to act by running them in a simulation”, it raises the question: Do we know what we need to simulate? This gets us a bit deeper into the problem. If we did know what we needed to simulate, we might not need to simulate it at all. But still, there are likely benefits to running simulations at whatever level of fidelity (and noise) current computation is capable of handling.

We know that the real world is messy and complex beyond what we can simulate, so I don’t think this is an either-or question. We can and should do both.

What is missing from the machine who is learning? Mortality, desire, pain, pleasure?

It is hard, with a straight face, to ask this question, dear reader. It was not my intention to get to this question so quickly after starting my studies of neuroscience and theory of mind. And yet, here we are. It might be useful to turn this around and ask it another way. Maybe our own intelligence has become as impressive as it is due to our impressively complex desires, emotions, and social structures? Could it happen any other way? That’s the question we can’t yet answer. Probably it could, but we haven’t seen it yet, so we just don’t know. I would say that while it is possible to make a convincing simulation of an embodied intelligent creature that lacks these desires and emotions, it will in fact be much more difficult and failure-prone to do so. I posit that it is just as important for an intelligent being to have emotions as it is to have a body.

We need to do this in the real world with real data, however slow and messy that may be

As Andrej Karpathy has commented recently, the current LLMs are not people, they’re more like ghosts. This essay supports that notion. While there is an extremely useful and impressive level of… “simulated reasoning” available from today’s LLMs, they are not the full answer to the question of “can we build an intelligent embodied mind”.

If our goal is to build artificial beings which can function and thrive in the physical world, and live safely among people, then we do need something fundamentally different. We’ve got a “brain in a jar” that consumes just words – this is one kind of intelligence, and it works for certain things but not others. It’s the “others” part that this essay is about. The world has no words in it, but intelligence emerges from it, from a brain that can make high level abstractions about the physical world around it, about its own body, about the bodies and minds of others, informed by its own desires and needs, the desires and needs of others, and whatever goals it has. So yes, to make a pie from scratch you need to build a universe. And to build true embodied artificial intelligence, you need embodied beings in a physical world, learning from each other. We can’t have one without the other – or at least, we know that this works in our world, so it makes sense to replicate it. Just as we’ve been inspired by neurons to make our AI neural networks, so should we be inspired by the physical reality of embodied intelligence, to build our own artificial embodied intelligence. We, as people, would be nothing without our bodies, the world around us, our friends and family, and our own desires, goals, and emotions. Let’s build the Buddhist Robots and see what happens.