LLMs will not achieve AGI. We need something else, but where will the training data come from?

This article was originally published on LinkedIn by our Co-Founder and CEO, Jeffrey Martin.

Artificial General Intelligence will not happen with our current language-based (LLM) paradigms.

The natural world has no words in it. All animals, from rats to dogs to humans, can navigate, plan, and achieve goals within the world without words. On these grounds, it’s safe to say that pursuing language specifically is not the right path to general intelligence.

All animals, as well as humans until a young age, live and thrive in a world without using language, without using words. How they do so is not fully solved, but we have made considerable progress in the last few decades in understanding how an embodied agent navigates the world, understands it, and balances predictions with observations. Some of the latest research (what neuroscientists call “predictive processing”) suggests that we are not simply looking around and building a 3D model of our environment, and then deciding what to do – rather, we are constantly in a fast loop of

- 1) making assumptions about the world around us and

- 2) fine-tuning them according to our observations: confirming our assumptions where they hold, and adjusting them where they produce errors.

We are, essentially, running on a slightly delayed hallucination of our reality, and nudging it toward better accuracy as we move through it; we don’t observe the world so much as we observe what doesn’t match our expectations, a kind of more efficient error encoding. But what, exactly, does the brain do, and how? I’ll get more into that in the future: what is known, what we’re guessing, what remains to be solved, and the gap between animal and machine.
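To make the predict-then-correct loop concrete, here is a minimal Python sketch. It is a toy illustration under loud assumptions, not a model of the brain and not taken from any predictive-processing paper: the "world" is a single number, and the function name `predictive_loop` and the parameters `gain` and `surprise_threshold` are hypothetical names chosen for this example.

```python
def predictive_loop(observations, initial_belief=0.0, gain=0.5, surprise_threshold=0.1):
    """Track a scalar signal by predicting it and correcting on error.

    Returns the final belief and the (step, error) pairs that were large
    enough to count as "surprises". All names and defaults are illustrative.
    """
    belief = initial_belief
    surprises = []
    for step, observed in enumerate(observations):
        predicted = belief                # 1) assume the world is as we last believed
        error = observed - predicted      # 2) compare that assumption with the observation
        if abs(error) > surprise_threshold:
            surprises.append((step, error))  # only the mismatch needs to be recorded
        belief = predicted + gain * error    # nudge the assumption toward the evidence
    return belief, surprises


if __name__ == "__main__":
    # A world that sits at 1.0, then jumps to 3.0 halfway through.
    signal = [1.0] * 10 + [3.0] * 10
    belief, surprises = predictive_loop(signal, initial_belief=1.0)
    print(f"final belief: {belief:.3f}")
    print(f"surprising steps: {[step for step, _ in surprises]}")
```

While the signal matches the belief, nothing is recorded at all; only the handful of steps right after the jump register as surprises, which is the "error encoding" idea in miniature.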

Yann LeCun’s proposed JEPA architecture aims to replicate some of the latest theories about this basic structure and feedback loop, a sequence of thoughts along the lines of “this is the world as I understand it, here is the information I need to consider and I can forget all the other stuff, here is what I observe now, this is what I think is going to happen, and this is what I should do NOW.” This is a great place to start, and it is thrilling to see one of the greatest minds in the history of AI rejecting the LLM hype and pursuing something genuinely important and unsolved. Certainly, it is a bold proposal: it is NOT a generative, next-token-prediction model (even though published JEPA implementations such as I-JEPA use transformer encoders as building blocks), it is NOT a naive bottom-up approach of simply building a 3D model of the world via sensor data, and it does more closely match the latest research about how the brain does this in such a fantastically economical and successful way.

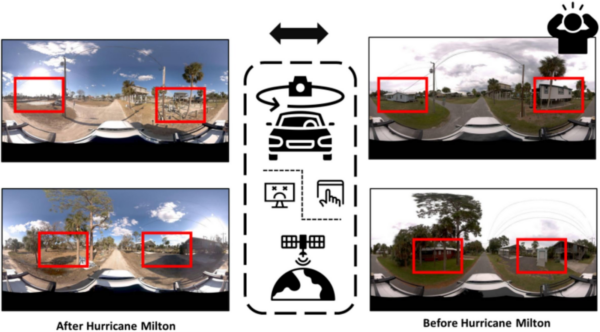

Now, here is a question that I don’t see people asking enough: what should the training data for this be? It’s not text, and I don’t think it should be YouTube videos (too unstructured) or even autonomous-car training data (too specific). So what should it be? It should be a massive, truly massive, not-yet-existing, highly varied dataset of multi-modal, calibrated, accurate sensor data. Just as a human baby learns everything about how to move through the world via her own multi-sensory experience, so should our world models learn from accurate sensor data that offers high confidence and reliability.
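To make "multi-modal, calibrated" a little more tangible, here is one possible shape for a single training sample, sketched in Python. This is purely illustrative and does not describe any existing dataset or capture format: the class names, field names, and the 5 ms synchronization tolerance are all assumptions made up for this example.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class SensorReading:
    """One reading from one sensor. Every field name here is hypothetical."""
    sensor_id: str       # e.g. "camera_front"
    modality: str        # e.g. "image", "lidar", "imu", "gnss"
    timestamp_ns: int    # capture time on a clock shared by all sensors
    calibration_id: str  # which calibration record applies to this reading
    payload_uri: str     # where the raw data is stored


@dataclass
class MultiModalSample:
    """A bundle of readings that are meant to describe the same moment."""
    readings: list = field(default_factory=list)

    def modalities(self):
        # Distinct modalities present in this sample, in sorted order.
        return sorted({r.modality for r in self.readings})

    def max_skew_ns(self):
        # Largest timestamp gap between any two readings in the sample.
        if not self.readings:
            return 0
        times = [r.timestamp_ns for r in self.readings]
        return max(times) - min(times)

    def is_synchronized(self, tolerance_ns=5_000_000):
        # The 5 ms default is an arbitrary illustrative tolerance.
        return self.max_skew_ns() <= tolerance_ns
```

The point of the sketch is only that each reading carries its own timestamp and a pointer to its calibration, so a sample can be checked for consistency before it is ever used for training.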

So: where are we going to get this data from?

Throughout the history of scientific breakthroughs, it has often happened that multiple people or teams solved the same problem at nearly the same time. Somehow, the world is just ready for that next advance. Right now there seems to be a race heating up between some of the most brilliant minds in computer science, including Fei-Fei Li of World Labs and Yann LeCun of AMI Labs, both of whom have raised substantial sums and are attracting serious talent in service of solving this puzzle. Notably, this puzzle is not part of the Large Language Model hype; it is most definitely a separate problem.

But in addition to money, talent, and research, what else do we need to solve this problem? Well, we need training data, and lots of it. We need to remember that the revolution of transformers and LLMs happened in part because the training data was readily available: copyright debates aside, the text was quite literally sitting around and piling up, ready to use. There seems to be a general assumption that any other training data will just kind of happen by itself, or that we can find it somewhere, and that whatever we have will be enough and usable. Well, I don’t think it will be. We need huge volumes of specific data that just doesn’t exist yet. I’m not theorizing about this gap; I have been living in it.

I’ve been creating spatial data processes, platforms, and hardware for 20 years. I made one of the first geospatial websites, combining 360 images and maps, predating Google Maps and Street View. I have a Guinness world record in 360-degree imaging. Mosaic has shipped geospatial capture systems to customers in over 50 countries and counting. This is what I have always been doing. I didn’t set out to capture data in order to build an artificial brain; I set out to document the world. But maybe these are the same thing.

I believe that the next big prize in AI involves solving something that the animal brain solved with amazing efficiency and success millions of years ago, but that we still don’t fully understand. And I believe this prize will be won not only via a smart new architecture (maybe JEPA, maybe something else), but via the right kind of training data that allows us to test and deploy this architecture.

Even considering all of this, there are many questions remaining to be answered from all sides: What specifically is this highly accurate, calibrated training data, and what is the minimum viable structure? What exactly is this abstraction of the world that we hold in our mind’s eye? Will this whole solution of world models be something that is actually simple, once we figure it out, or will it be something that humans do not even fully understand? I hope to dive into these and other questions soon. Stay tuned.